Overview

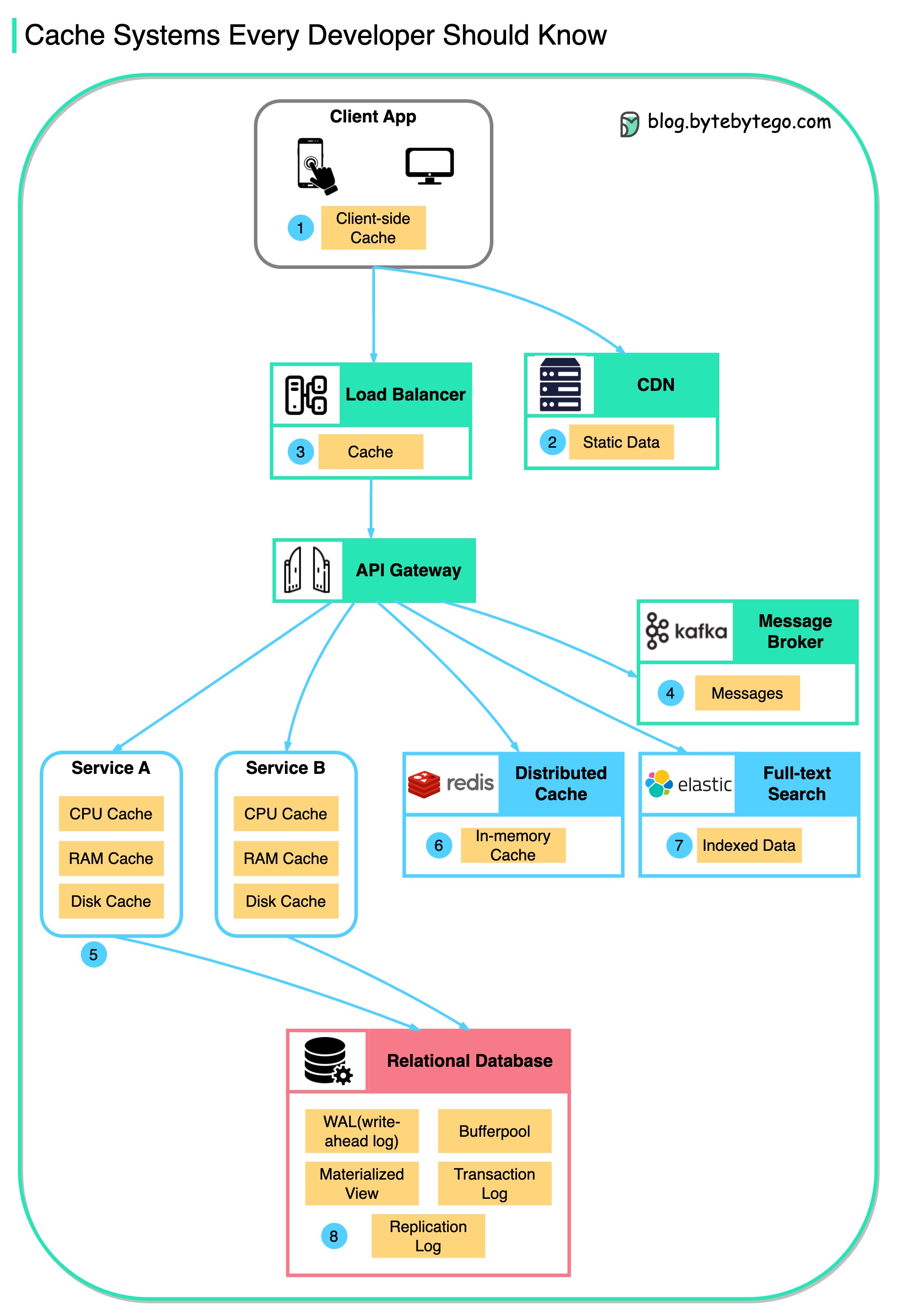

Caching is a technique that stores a copy of a given resource and serves it back when requested. When a web server renders a web page, it stores the result of the page rendering in a cache. The next time the web page is requested, the server serves the cached page without re-rendering the page. This process reduces the time needed to generate the web page and reduces the load on the server.

Cache Systems Every Developer Should Know

Data is cached everywhere, from the client-facing side to backend systems. Let’s explore the many caching layers:

1. Client Apps (Browser Cache)

- Browsers cache HTTP responses locally

- Server responses include caching directives in headers

- Upon subsequent requests, browsers may serve cached data if still fresh

- Reduces bandwidth and improves load times
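As a rough illustration of how a client might decide whether a stored response is "still fresh", the sketch below checks only the `max-age`, `no-store`, and `no-cache` directives of a `Cache-Control` header. The function names `parse_max_age` and `is_fresh` are hypothetical, and real browsers apply many more rules (for example `Expires`, `Age`, and revalidation), so treat this as a simplified model rather than browser behavior.

```python
from typing import Optional


def parse_max_age(cache_control: str) -> Optional[int]:
    """Return max-age in seconds, or None if the response must not be reused as-is."""
    directives = [d.strip().lower() for d in cache_control.split(",")]
    # Simplification: treat no-store and no-cache as "never serve without asking".
    if "no-store" in directives or "no-cache" in directives:
        return None
    for directive in directives:
        if directive.startswith("max-age="):
            try:
                return int(directive.split("=", 1)[1])
            except ValueError:
                return None
    return None


def is_fresh(cache_control: str, stored_at: float, now: float) -> bool:
    """True if the stored response is younger than its max-age."""
    max_age = parse_max_age(cache_control)
    return max_age is not None and (now - stored_at) < max_age
```

For instance, a response stored at time 100 with `public, max-age=60` would count as fresh at time 130 but not at time 200.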

2. Content Delivery Networks (CDN)

- Cache static content like images, stylesheets, and JavaScript files

- Serve cached content from locations closer to users

- Dramatically reduce latency and load times

- Handle traffic spikes effectively

3. Load Balancers

- Some load balancers cache frequently requested data

- Serve responses without engaging backend servers

- Reduce load and response times

- Act as intelligent traffic managers

4. Message Brokers

- Systems like Kafka cache messages on disk per retention policy

- Consumers pull messages according to their own schedule

- Provide replay capability for event streaming

5. Application Services

- Services employ in-memory caching to improve data retrieval speeds

- Check cache before querying databases

- May use disk caching for larger datasets

- Common patterns: cache-aside, write-through, write-behind

6. Distributed Caches

- Systems like Redis and Memcached cache key-value pairs across services

- Typically provide faster reads and writes than disk-backed databases because data is served from memory

- Support advanced data structures (lists, sets, sorted sets)

- Enable sharing cached data across multiple application instances

7. Full-Text Search Engines

- Platforms like Elasticsearch index data for efficient text search

- The index is effectively a form of cache

- Optimized for quick text search retrieval

- Support complex queries and aggregations

8. Database Caching

Databases have specialized mechanisms to enhance performance:

- Buffer Pool: Cache within the database that holds copies of data pages

- Materialized Views: Store precomputed results of expensive queries

- Query Result Cache: Cache results of identical queries

Top Caching Strategies

Cache-Aside (Lazy Loading)

Cons: Cache misses are expensive, potential for stale data
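In cache-aside, the application checks the cache first, loads from the database on a miss, and populates the cache itself. The sketch below is one minimal way to express that flow; the class name `CacheAsideRepository` is hypothetical, and plain dictionaries stand in for both the cache and the database.

```python
class CacheAsideRepository:
    """Cache-aside: the application, not the cache, talks to the database."""

    def __init__(self, database: dict):
        self.database = database  # stand-in for a real database (assumption)
        self.cache = {}           # stand-in for an in-memory or remote cache
        self.db_reads = 0         # counts how often a miss reached the database

    def read(self, key):
        if key in self.cache:
            return self.cache[key]        # cache hit
        self.db_reads += 1                # cache miss: go to the database
        value = self.database.get(key)
        if value is not None:
            self.cache[key] = value       # populate the cache for next time
        return value

    def write(self, key, value):
        self.database[key] = value
        # Invalidate rather than update, so the next read reloads fresh data.
        self.cache.pop(key, None)
```

The first read of a key pays the database round trip, which is the "expensive miss" noted above. Invalidating on write limits stale data only for writes that go through this code path.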

Read-Through

Cons: Initial requests are slow, all data goes through cache

Write-Through

Cons: Higher latency on writes, unnecessary writes if data rarely read
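A write-through cache updates the backing store and the cache in the same operation, which is where the extra write latency comes from. A minimal sketch, assuming a dictionary as the backing store and a hypothetical `WriteThroughCache` class:

```python
class WriteThroughCache:
    """Write-through: every write goes to the store and the cache together."""

    def __init__(self, store: dict):
        self.store = store  # stand-in for a database (assumption)
        self.cache = {}

    def put(self, key, value):
        self.store[key] = value  # synchronous write to the backing store
        self.cache[key] = value  # cache is updated in the same operation

    def get(self, key):
        # Fall back to the store if the key was written before the cache existed.
        if key not in self.cache and key in self.store:
            self.cache[key] = self.store[key]
        return self.cache.get(key)
```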

Write-Behind (Write-Back)

Cons: Risk of data loss, more complex implementation
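Write-behind acknowledges the write once the cache is updated and persists it later. The sketch below, with a hypothetical `WriteBehindCache` class and an explicit `flush()` call standing in for a background flusher, shows why unflushed entries are at risk if the process dies.

```python
class WriteBehindCache:
    """Write-behind: writes land in the cache first and reach the store later."""

    def __init__(self, store: dict):
        self.store = store  # stand-in for a database (assumption)
        self.cache = {}
        self.dirty = set()  # keys written to the cache but not yet persisted

    def put(self, key, value):
        self.cache[key] = value
        self.dirty.add(key)  # remember that the store is behind for this key

    def flush(self) -> int:
        """Persist all dirty entries; returns how many were written."""
        flushed = len(self.dirty)
        for key in self.dirty:
            self.store[key] = self.cache[key]
        self.dirty.clear()
        return flushed
```

In a real system the flush would usually run on a timer or a queue; anything still in `dirty` at crash time is lost.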

Refresh-Ahead

Cons: Difficult to predict access patterns, wasted resources on wrong predictions

Cache Eviction Policies

When the cache is full, which items should be removed?

LRU (Least Recently Used)

- Evicts items that haven’t been accessed recently

- Most commonly used policy

- Good balance between simplicity and effectiveness
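One common way to sketch LRU in Python is with `collections.OrderedDict`, moving a key to the end on every access and evicting from the front. The class name `LRUCache` is illustrative only.

```python
from collections import OrderedDict


class LRUCache:
    """Evicts the least recently used entry once capacity is exceeded."""

    def __init__(self, capacity: int):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self._items = OrderedDict()  # oldest access first, newest last

    def get(self, key, default=None):
        if key not in self._items:
            return default
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def put(self, key, value):
        if key in self._items:
            self._items.move_to_end(key)
        self._items[key] = value
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # drop the least recently used
```

For memoizing pure functions, the standard library's `functools.lru_cache` decorator offers the same policy without a custom class.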

LFU (Least Frequently Used)

- Evicts items accessed least often

- Better for long-term access patterns

- More complex to implement
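To illustrate the policy, the following sketch keeps a hit counter per key and evicts the key with the lowest count. It scans all counters on eviction, which is O(n); production LFU implementations typically use frequency buckets to avoid that scan. The `LFUCache` name and the tie-breaking behavior (oldest inserted key wins the eviction) are choices made for this example.

```python
class LFUCache:
    """Evicts the entry with the fewest recorded accesses."""

    def __init__(self, capacity: int):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.values = {}
        self.hits = {}  # key -> number of gets and puts seen

    def get(self, key, default=None):
        if key not in self.values:
            return default
        self.hits[key] += 1
        return self.values[key]

    def put(self, key, value):
        if key not in self.values and len(self.values) >= self.capacity:
            # Linear scan for the least frequently used key.
            victim = min(self.hits, key=self.hits.get)
            del self.values[victim]
            del self.hits[victim]
        self.values[key] = value
        self.hits[key] = self.hits.get(key, 0) + 1
```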

FIFO (First In, First Out)

- Evicts oldest items first

- Simple but may remove frequently used old items

- Good for time-based data

TTL (Time To Live)

- Items expire after specified duration

- Good for data that becomes stale

- Often combined with other policies
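A TTL policy can be sketched by storing an expiry timestamp next to each value and checking it on read. The `TTLCache` class below is hypothetical; the `clock` parameter is an assumption added so the behavior can be tested without sleeping.

```python
import time


class TTLCache:
    """Entries expire a fixed number of seconds after they are written."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable time source (assumption, for testing)
        self._items = {}    # key -> (value, expires_at)

    def put(self, key, value):
        self._items[key] = (value, self.clock() + self.ttl)

    def get(self, key, default=None):
        entry = self._items.get(key)
        if entry is None:
            return default
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._items[key]  # lazily remove the expired entry
            return default
        return value
```

This version removes expired entries only when they are read; combining it with a size-based policy such as LRU keeps never-read entries from accumulating.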

Random

- Randomly select items for eviction

- Simple and fast

- Surprisingly effective in some scenarios

Cache Invalidation Challenges

Strategies for Cache Invalidation

- TTL-Based: Set expiration times on cached data

- Event-Based: Invalidate when underlying data changes

- Manual: Application explicitly invalidates cache entries

- Version-Based: Include version in cache key
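As a small illustration of the version-based approach, the sketch below builds cache keys that embed a version number, so bumping the version makes old entries unreachable without deleting them. The key layout and the helper name `versioned_key` are assumptions made for this example.

```python
def versioned_key(namespace: str, item_id: str, version: int) -> str:
    """Build a cache key that changes whenever the item's version changes."""
    return f"{namespace}:{item_id}:v{version}"


cache = {}
versions = {"42": 1}  # current version per item (hypothetical bookkeeping)

# Cache the item under its current version.
cache[versioned_key("user", "42", versions["42"])] = {"name": "Ada"}

# The underlying data changes: bump the version instead of deleting the entry.
versions["42"] += 1

# Lookups now use the new key, so the old entry is never read again.
after_bump = cache.get(versioned_key("user", "42", versions["42"]))
```

Orphaned entries under old versions still occupy memory, so this approach is usually paired with a TTL or an eviction policy.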

Common Cache Problems

Cache Stampede

Problem: Many requests for an expired cache key hit the database simultaneously.

Solutions:

- Use probabilistic early expiration

- Implement request coalescing

- Use locks to allow only one request to refresh
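The lock-based option can be sketched with one lock per key, so that concurrent callers for the same missing key wait while a single caller reloads it. The class name `SingleFlightCache` is hypothetical, entries in this sketch never expire, and the per-key locks are never cleaned up, all of which a real implementation would need to handle.

```python
import threading


class SingleFlightCache:
    """Lets only one thread per key call the loader; the rest reuse its result."""

    def __init__(self, loader):
        self._loader = loader            # function that fetches from the database
        self._cache = {}
        self._locks = {}                 # key -> lock guarding that key's reload
        self._guard = threading.Lock()   # protects the _locks dictionary itself

    def get(self, key):
        if key in self._cache:
            return self._cache[key]
        with self._guard:
            lock = self._locks.setdefault(key, threading.Lock())
        with lock:
            # Re-check: another thread may have filled the cache while we waited.
            if key in self._cache:
                return self._cache[key]
            value = self._loader(key)
            self._cache[key] = value
            return value
```

With several threads requesting the same cold key, the loader runs once and the waiting threads pick up the cached value after the lock is released.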

Cache Penetration

Problem: Requests for non-existent data always miss the cache, so every one of them reaches the database.

Solutions:

- Cache null results with short TTL

- Use Bloom filters to check existence

- Validate requests before checking cache
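A Bloom filter answers "definitely not present" or "possibly present", which lets a service reject lookups for keys that were never stored before touching the cache or database. The sketch below is a minimal version built on `hashlib.sha256`; the sizing defaults and the class name `BloomFilter` are illustrative, not tuned values.

```python
import hashlib


class BloomFilter:
    """Probabilistic set: no false negatives, occasional false positives."""

    def __init__(self, size_bits: int = 1024, num_hashes: int = 3):
        self.size = size_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((size_bits + 7) // 8)

    def _positions(self, item: str):
        # Derive several bit positions by salting the hash input with an index.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{item}".encode("utf-8")).digest()
            yield int.from_bytes(digest[:8], "big") % self.size

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item: str) -> bool:
        return all(
            self.bits[pos // 8] & (1 << (pos % 8))
            for pos in self._positions(item)
        )
```

If `might_contain` returns `False`, the key was never added and the request can be rejected early; a `True` result still requires the normal cache and database lookup.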

Cache Avalanche

Problem: Many cache keys expire simultaneously, sending a burst of requests to the database.

Solutions:

- Randomize TTL values

- Use cache warming strategies

- Implement hierarchical caching
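Randomizing TTLs can be as simple as adding a small random offset to a base value so that keys written together do not all expire together. The helper name `jittered_ttl` and the default 10% spread are assumptions for this sketch.

```python
import random


def jittered_ttl(base_seconds: float, jitter_fraction: float = 0.1, rng=random) -> float:
    """Return the base TTL shifted by a random amount within +/- jitter_fraction."""
    spread = base_seconds * jitter_fraction
    return base_seconds + rng.uniform(-spread, spread)
```

With a base of 300 seconds and the default spread, each key gets a TTL of roughly 270 to 330 seconds, which spreads the resulting reloads over about a minute.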

Performance Optimization Tips

Monitor Cache Hit Ratio

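Track the hit ratio (hits divided by total lookups) to ensure the cache is effective. A hit rate above 80% is a common rule of thumb, though the right target depends on the workload.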
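The hit ratio is hits divided by total lookups. A minimal counter, using a hypothetical `HitRatioCounter` class, might look like this:

```python
class HitRatioCounter:
    """Tracks cache hits and misses and reports the hit ratio."""

    def __init__(self):
        self.hits = 0
        self.misses = 0

    def record(self, hit: bool) -> None:
        if hit:
            self.hits += 1
        else:
            self.misses += 1

    def ratio(self) -> float:
        total = self.hits + self.misses
        # Report 0.0 before any lookups to avoid dividing by zero.
        return self.hits / total if total else 0.0
```

For example, 8 hits and 2 misses give a ratio of 0.8. Managed caches usually expose equivalent counters, so a hand-rolled class like this is mainly useful for in-process caches.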

Right-Size Your Cache

Balance memory usage with hit rate. More isn’t always better.

Use Appropriate TTLs

Set TTLs based on data change frequency and staleness tolerance.

Cache Serialization

Choose efficient serialization formats (Protocol Buffers, MessagePack).

Redis: The Swiss Army Knife of Caching

Redis is more than just a cache:

- Data Structures: Strings, hashes, lists, sets, sorted sets, bitmaps

- Persistence Options: RDB snapshots, AOF logs

- High Availability: Redis Sentinel for monitoring and failover

- Scalability: Redis Cluster for horizontal scaling

- Use Cases: Caching, session storage, real-time analytics, message queuing

Related Guides

Explore more about caching:

- Cache Systems Every Developer Should Know

- Top 5 Caching Strategies

- Cache Eviction Policies

- How Cache Systems Can Go Wrong

- Netflix’s Caching Strategy

- Redis 101

- Things to Consider When Using Cache

Effective caching can dramatically improve application performance, but it introduces complexity. Always measure and monitor to ensure your caching strategy delivers value.